The U.S. Department of Education’s Draft Gainful Employment Survey Appeal Standards

By Yolanda R. Gallegos* Gallegos Legal Group

*Special thanks to Marc LoGrasso, Ph.D. for assistance interpreting statistical data

“When I looked in her eyes, they were blue but nobody home.” – Scary Monsters (And Super Creeps), David Bowie (1980)

When the government creates a process to challenge the integrity of its decisions that is founded on arbitrary and fallacious metrics, it creates only the illusion of fairness.

The White House Office of Information and Regulatory Affairs (OIRA) is well into the process of reviewing the U.S. Department of Education’s (ED’s) gainful employment earnings survey standards under the Paperwork Reduction Act of 1995 (44 U.S.C. §§ 3501 et seq.). These standards impose seemingly arbitrary quantum and proportionality thresholds, unreasonably reject earnings data when respondents do not demographically mirror the graduate cohort, and prohibit institutional adjustment for missing earnings data while permitting statistically invalid adjustment for ED’s SSA earnings data.

Specifically, ED’s National Center for Education Statistics (NCES) has developed the Recent Graduates Employment and Earnings Survey (RGEES) Standards to be used as an alternative earnings appeal for educational institutions challenging the earnings data produced by the Social Security Administration (SSA) in calculating gainful employment (GE) debt to earnings (DE) rates pursuant to provisions in 34 C.F.R. Parts 600 & 668. To date, ED has twice solicited public comments on the RGEES Standards, and OIRA is currently reviewing them. ED is expected to publish the final standards very soon. The first solicitation of comments yielded a total of six (6) comments and the second solicitation yielded three (3), one of which was essentially an advertisement for placement verification services. In contrast, over 90,000 members of the public commented on the first iteration of the gainful employment regulations and 95,000 commented on the second, 2014 version.

Effectively, these RGEES standards have flown below the radar of those who will be subjected to them. This article will provide readers with an overview of the RGEES Standards as published before the last comment period expired. Because OIRA review is pending, the Standards may change before ED issues them in final form. Unfortunately, given the dearth of public attention to the Standards, it is likely that they will not vary significantly.

Earnings Survey Appeals: Why Should You Care?

Acknowledged flaws in SSA earnings data

Because the SSA earnings data suffer from well-known flaws, both of the alternative earnings appeals – the earnings survey appeal and the state-sponsored data appeal – play a vital role in ensuring the integrity and reliability of the earnings data used in the GE DE metrics. The flaws can be summarized as follows:

- In calculating earnings for the DE rates, SSA imputes zero values to graduates in GE cohorts in cases where SSA’s Master Earnings File (MEF) has no earnings data on the student. ED asserts that this practice does not skew the data, but the practice is contrary to accepted statistical methods.

- There are several common circumstances where SSA will have no earnings data for an individual in its MEF in any particular tax year despite the fact that the individual did have earnings (e.g., an erroneous W-2, misreported or underreported self-employment income, etc.). In fact, ED admits that of the over 8 million earnings files SSA transferred to ED in 2011-12 for the GE informational rates and the White House Scorecard, SSA imputed zero earnings to 11.4 percent of surveyed graduates. 79 Fed. Reg. 64,890, 64,954 (Oct. 31, 2014).

In fact, ED has conceded that the SSA data have “shortcomings” and “perceived flaws,” and it relies on the alternative state earnings and RGEES appeals to correct these issues. 79 Fed. Reg. at 64,956-57.

Earnings survey appeals only earnings appeal method available to all institutions

While ED presents the alternative earnings appeals as the answer to the “shortcomings” in the SSA earnings data, the state-sponsored data appeal is not available in all states. 79 Fed. Reg. at 16,459. ED has expressly acknowledged that state-sponsored income data are not accessible to institutions in all states and, even where they are, such data for students who migrate out of state may be unattainable. Moreover, state earnings data do not cover all employees. 79 Fed. Reg. 16,426, 16,459 (March 25, 2014); see also 76 Fed. Reg. 34,386, 34,428 (June 13, 2011); 79 Fed. Reg. at 64,962-63 (“As one commenter noted, and as described in more detail in the NPRM, we believe that there are limitations of State earnings data, notably relating to accessibility and the lack of uniformity in data collected on a State-by-State basis”) (emphasis added). Given these state earnings data deficits, RGEES becomes the only viable option for many institutions to correct the inherently unreliable SSA earnings data.

Because RGEES is the sole and last check on earnings data integrity available to all institutions in all states, a fair and balanced RGEES appeals process is all the more important. It is questionable, however, whether ED’s proposed RGEES Standards meet this standard.

ED’s required 50 percent response rate

The RGEES Standards will reject any earnings survey that does not produce the responses of at least 50 percent of an institution’s graduates in its program’s cohort. ED has been unclear and inconsistent in explaining the basis for this 50 percent standard. In response to the first round of comments, ED suggested that the Office of Management and Budget’s “Guidance on Agency Survey and Statistical Information Collections” [hereafter “OMB Guidance”] mandates this response rate, but this Guidance does not apply to educational institutions and, even if it did, the OMB Guidance does not require a 50 percent rate of response.

The OMB Guidance applies to information collection carried out by federal agencies, not educational institutions challenging federal agency data, as is the case here. OMB Guidance at 1 (“This guidance is designed to assist agencies and their contractors in preparing Information Collection Requests…for surveys”). In fact, the entire Paperwork Reduction Act (PRA) applies only to “federal agenc[ies]” to restrict their ability to impose unnecessarily burdensome paperwork requirements on the public; it does not apply to private entities to increase their obligations when reporting data to a federal agency. See 44 U.S.C. § 3506 (listing PRA responsibilities of federal agencies). Accordingly, while the PRA was enacted to ensure that ED does not impose excessive burdens on educational institutions via the RGEES Standards, it does not impose the statistical standards applicable to federal agencies on private educational institutions. 44 U.S.C. § 3501(1) (“The purposes of this subchapter [44 USCS §§ 3501 et seq.] are to – (1) minimize the paperwork burden for individuals, small businesses, educational and nonprofit institutions, federal contractors, State, local and tribal governments, and other persons resulting from the collection of information by or for the federal government”) (emphasis added). To impose on educational institutions the burdens of meeting governmental statistical standards in the course of carrying out a PRA burden review turns the PRA on its head.

Neither the OMB nor any other federal agency imposes any particular response rate for the RGEES Survey. In fact, despite its suggestion that OMB Guidance requires the 50 percent rate, in response to comments, ED admits “[f]ifty percent is not the Federal standard for a minimum survey response rate.” ED Responses to Public Comments on RGEES Survey and Standards at 10 (www.regulations.gov). And the OMB Guidance, upon which ED has relied to support its use of the 50 percent rate, states that the “Paperwork Reduction Act does not specify a minimum response rate.” OMB Guidance at 59.

Instead, OMB instructs federal agencies to “strive to obtain the highest practical rates of response, commensurate with the importance of survey uses, respondent burden, and data collection costs.” Id. at 60. Thus, OMB suggests a practical, evidence-based approach. OMB requires ED to provide “complete descriptions” of how it determined the appropriate response rate. Id. at 61. In arriving at its conclusion, OMB suggests considering “past experience with response rates when studying this population, prior investigations of nonresponse bias, plans to evaluate nonresponse bias, and plans to use survey methods that follow best practices that are demonstrated to achieve good response rates.” Id.

It appears ED considered none of these factors. Instead, ED selected the response rate based solely on the requirement in the GE regulations that institutions submitting state-sponsored alternate earnings appeals obtain earnings data for more than 50 percent of the students in the GE cohort. See 34 C.F.R. § 668.406(d)(2). In fact, ED’s only explanation of the 50 percent threshold is found in a brief footnote to its RGEES NRBA Supporting Analysis at 1, n. 1:

The 50 percent cut point was set for consistency with the other alternative earnings source that is allowable under the GE regulations. Specifically, the regulations require the availability of earnings data from state employment records for at least 50 percent of the students in the program’s cohort of graduates.

Thus, ED did not consider past experience with response rates when studying the applicable population or prior investigations of nonresponse bias; it simply adopted the 50 percent standard found in the regulations for the state-sponsored data system appeals.

Had ED considered past experience, it likely would have informed itself about the extraordinary difficulty of obtaining responses from individuals regarding income, particularly where the survey is not originating from a federal agency. For example, the OMB Guidance acknowledges that response rates have declined overall in the past few years and more substantially for non-government surveys:

More recent, but less systematic observations suggest that response rates have been decreasing in many ongoing surveys in the past few years. Some evidence suggests these declines have occurred more rapidly for some data collection modes (such as RDD telephone surveys) and are more pronounced for non-government surveys than Federal Government surveys.

Generally, these declines have occurred despite increasing efforts and resources that have been expended to maintain or bolster response rates.

OMB Guidance at 60 (emphasis added). Likewise, the U.S. Census Bureau has made the same observation:

Surveys conducted under the aegis of the federal government typically achieve much higher levels of cooperation than non-government surveys (e.g., Heberlein and Baumgartner 1978; Goyder 1987; Bradburn and Sudman 1989). census.gov.

In fact, the American Association for Public Opinion Research (AAPOR), the organization OMB encourages federal agencies to consult in calculating and reporting response rates (OMB Guidance at 57), has not only observed the marked decline in response rates overall, it goes so far as to question the precept that there is a positive association between response rates and data quality: “At the same time, studies that have compared survey estimates to benchmark data from the U.S. Census or very large governmental sample surveys have also questioned the positive association between response rates and quality.” AAPOR, “Response Rates – An Overview” (aapor.org).

ED’s supporting documentation provides no indication that ED considered past experience with the target population, the great decline in response rates in general, the tendency for income survey recipients to be less inclined to respond to a non-governmental survey, or the recent data questioning the presumption that there is a positive association between response rates and data quality. ED’s oversight in this regard provides the basis to seek ED’s reconsideration of its 50 percent response rate.

ED’s use of absolute values to estimate relative bias

To address the “serious problem” of nonresponse bias, the proposed RGEES Standards require that an institution submitting an earnings survey appeal carry out (or allow the RGEES Platform to carry out) a nonresponse bias analysis to “indicate the potential impact of nonresponse bias” if its response rate is between 50 and 80 percent. RGEES Standards at No. 6. Nonresponse bias analysis is a mechanism to ascertain the effect of missing data in a survey. According to ED, if respondents differ in earnings status from non-respondents, then the results of the survey could be misleading. To address such potential nonresponse bias, the RGEES Standards require that respondents and non-respondents be compared based on whether they have attributes that ED states are correlated with low earnings. ED identified these attributes as being a Pell recipient, having a 0 EFC, and being female. Commenters expressed concern about whether these attributes are, in fact, correlated with earnings, given that ED repeatedly denied in the GE rulemaking and in the subsequent litigation that these factors are determinative of outcomes on the annual earnings rate. See, e.g., 79 Fed. Reg. at 64,910; ED Memorandum in Support of Motion for Summary Judgment, APSCU v. Duncan (1:14-cv-01870-JDB, U.S. District Court, Washington DC) (March 6, 2015) at 21-23.

ED has explained that while its analysis shows these factors are not correlated with DE rate performance, it does show negative correlations between earnings and these demographic factors. Specifically, ED stated that its data showed “the zero-order correlations between earnings and percent ZEFC, percent Pell, and percent female are -.593, -.611, and -.267, respectively.” ED Responses to Public Comments on RGEES Survey and Standards (regulations.gov).

Thus, according to ED’s analysis, if graduates with these attributes are over-represented in survey responses, the earnings results will be biased lower, and if they are under-represented, the results will be biased toward higher earnings. However, the Standards require the consideration of the average “absolute value” of relative bias, rather than just average relative bias. The Standards explain how average relative bias is calculated as follows:

The average relative bias due to nonresponse, computed as the average of the absolute value of the relative bias due to nonresponse measured for each of the three attributes examined, is used to measure the relative bias due to nonresponse present in a set of the RGEES data. Id. (emphasis added).

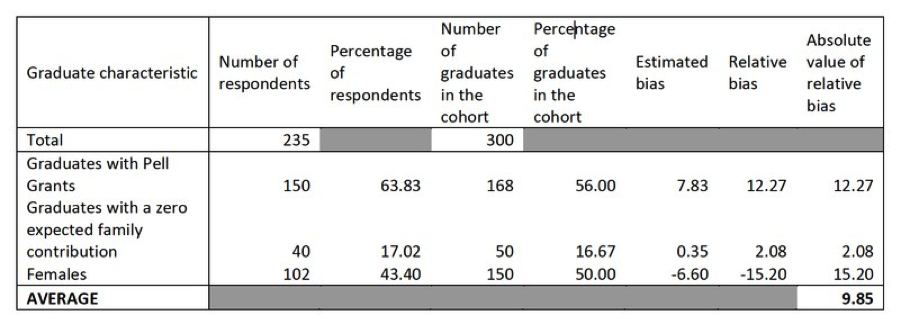

What this means in practice is that even where survey appeal responses are weighted disproportionately with Pell recipients, 0 EFC graduates, and/or female graduates, ED will reject an institution’s survey if the average absolute value of the relative bias exceeds 10 percent. The chart contained in the RGEES Best Practice Guide, demonstrates this concept:

Best Practices Guides: Recent Graduates Employment and Earnings Survey at 32.

In the example provided in the chart, the hypothetical institution has 12.27 percent more Pell respondents than in its program cohort, 2.08 percent more 0 EFC graduates than in its program cohort, and 15.20 percent fewer female graduates than in its program cohort. Yet ED’s use of absolute values treats the over-representation of Pell recipients and 0 EFC graduates, whose status biases reported earnings downward, the same as the under-representation of female graduates. This is particularly illogical when one bears in mind that, according to ED’s own analysis, the negative correlations between Pell and earnings and between 0 EFC and earnings (-.611 and -.593, respectively) are more than double the negative correlation between being female and earnings (-.267). Thus, in the chart’s example, if the average absolute value had exceeded 10 percent, ED would reject the survey even if the reported earnings were sufficient for the institution to meet the debt to earnings thresholds. In other words, ED considers only the magnitude of bias, not its direction.
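To make the difference between the two approaches concrete, the following minimal sketch averages the three figures quoted from the chart example in two ways: once using absolute values, as the Standards describe, and once as a simple signed (net) average. It assumes that the three quoted figures are the signed per-attribute relative biases, with a positive sign meaning over-representation among respondents. The function names are illustrative only, and the netted figure is not a calculation ED performs; it also makes no attempt to weight the attributes by the strength of their correlation with earnings.

```python
# Per-attribute figures quoted from the chart example, in percent.
# Assumption: these are signed relative biases, where a positive value means
# the attribute is over-represented among respondents and a negative value
# means it is under-represented.
relative_bias = {"pell": 12.27, "zero_efc": 2.08, "female": -15.20}


def average_absolute_bias(biases):
    """Average of absolute values: magnitude only, direction discarded."""
    return sum(abs(b) for b in biases.values()) / len(biases)


def average_net_bias(biases):
    """Simple signed average (illustrative alternative): direction retained."""
    return sum(biases.values()) / len(biases)


avg_abs = average_absolute_bias(relative_bias)
avg_net = average_net_bias(relative_bias)

print(f"average absolute relative bias: {avg_abs:.2f} percent")
print(f"average net relative bias:      {avg_net:.2f} percent")
```

Under these assumptions the absolute-value average comes to roughly 9.85 percent, just under the 10 percent threshold, while the signed average is close to zero (about -0.28 percent), because the over- and under-representation largely offset.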

If a nonresponse bias analysis is required, one would expect that ED would net the negative and positive biases rather than using absolute values and only reject surveys where the net average relative bias negates the reliability of the survey earnings. It is unclear why ED is requiring the use of absolute value of relative bias as it is not mandated by OMB Guidance, the publication on which ED has stated it relied for the RGEES Standards. Even the NCES, which designed these Standards, has acknowledged the relative insignificance of absolute values of bias.

There are different ways to evaluate bias. The absolute value of a bias does not provide much information on the impact of the bias on estimates. J. Bose, “Nonresponse Bias Analyses at the National Center For Education Statistics,” Proceedings of Statistics Canada Symposium (2001).

In fact, the use of absolute values is useful only if the magnitude but not the direction of bias is relevant. Bruno A. Walther and Joslin L. Moore, “The Concepts of Bias, Precision and Accuracy, and Their use in Testing the Performance of Species Richness Estimators, With A Literature Review of Estimator Performance,” ECOGRAPHY 28: 815-829, 2005 (The use of absolute values for “directionless” measurement of bias “is useful if only the magnitude and not the direction of bias is of interest”).

When an institution presents alternative earnings data for the GE metrics to challenge SSA’s earnings data, the direction of any bias is not only of interest, it is critical to assessing whether SSA has under-reported graduate earnings. The use of absolute values of relative bias disregards direction of bias and in this way ignores the entire purpose of the GE earnings survey appeal. If an institution can show earnings sufficient to meet the GE debt to earnings rate threshold even where the responses are biased downward, one would assume ED would, at a minimum, accept the survey results. In cases where the results are biased downward but an institution does not meet the GE debt to earnings threshold, it stands to reason that ED would determine a method of imputation of earnings or other statistically valid adjustment to make up for the bias.

ED provides no reasoned basis for requiring that the relative bias not exceed 10 percent

Section 6.2 of the final RGEES Standards states that the average relative bias may not exceed 10 percent in order for an earnings survey to be successfully used in a GE alternative earnings appeal. However, ED nowhere justifies this 10 percent relative bias threshold. In its response to comments, ED references a supporting analysis, which purports to carry out complex, multi-staged analyses to ascertain what should be the acceptable level of relative bias. RGEES NRBA Supporting Analysis at 7-11 (regulations.gov).

First, with its “What If” Analysis 1, ED states that it took data from three programs derived from the 2012 GE Informational Rates, which relied on 2011 Calendar Year earnings. Id. at 2. Each of these three programs was at or near the mean for one of the three variables used to calculate nonresponse bias (Pell, 0 EFC, female). ED then plotted out estimates of relative bias and relative distance for each of these three variables based on response rates of 50, 60, 70, and 80 percent and assumed varying sizes of the difference from the mean of increasing and decreasing percentage points (1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 15, 20). See id. at 18-21 (Figures 1-3). ED also plotted out the same for the data averaged across all three variables. Id. at 22 (Figure 4).

Although ED’s Supporting Analysis purports to show how response rates for each of the three variables uniquely affect relative bias, ED’s results are identical for each of the three variables and, of course, are also identical when it averaged the data for all such variables. Id. at 18-22. In each case, when looking at the results with a 50 percent response rate, a relative difference of 20 percent is associated with a relative bias of 10 percent. The results are identical because this just reflects a mathematical truism: at a 50 percent response rate and a relative difference of 20 percent, the relative bias will always be 10 percent (10 percent = 50 percent × 20 percent). This tells us nothing about why 10 percent should be the acceptable threshold for relative bias. In fact, ED admits that really the only conclusion to be made from its data plotting in its “What If” Analysis 1 is that “bias due to nonresponse is a function of the response rate and the difference in the relevant characteristics of respondents and nonrespondents.” Id. at 8. This is a known statistical principle for which manipulation and plotting of the 2012 GE Informational earnings data was unnecessary, unless the aim was to create the illusion of serious analysis.
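The truism can be reproduced in a few lines. The sketch below assumes the textbook nonresponse-bias identity, in which relative bias equals the nonresponse rate (one minus the response rate) multiplied by the relative difference between respondents and nonrespondents. The 50 percent case is the only one stated above, and there the response and nonresponse rates coincide; the figures for the other response rates follow from the assumed identity and are not taken from ED’s charts. The function name is illustrative.

```python
def relative_bias(response_rate, relative_difference):
    """Relative nonresponse bias under the assumed textbook identity:
    (1 - response rate) * relative difference between respondents
    and nonrespondents. Both arguments are fractions (0.50 = 50 percent).
    """
    return (1.0 - response_rate) * relative_difference


# Response rates ED plotted, each paired with a 20 percent relative difference.
results = {rr: relative_bias(rr, 0.20) for rr in (0.50, 0.60, 0.70, 0.80)}

for rr, rb in results.items():
    print(f"response rate {rr:.0%}: relative bias {rb:.1%}")
```

Note that the function takes no argument identifying the attribute. Whether the variable is Pell, 0 EFC, or female, the same inputs produce the same output, which is why the three sets of plots are identical.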

ED’s “What If” Analysis 2, likewise, presents as the product of an elaborate exercise resulting in colorful charts that show no apparent justification for the 10 percent relative bias threshold. For “What If” Analysis 2, ED charted out by response rate (50, 60, 70, and 80 percent) the distribution of average relative bias by absolute difference from the known population values drawn from the 2012 GE Informational data. Id. at 23-26. In each chart, ED shows by the specific response rate the percentage of the GE programs (with cohorts over 30) that have average relative bias of 0 to 5 percent, 5 to 10 percent, and over 10 percent. See id. Unsurprisingly, the charts show that the lower the response rate, the greater the number of programs with an average relative bias in excess of 10 percent. See id.

What these charts do not show is why 10 percent is the threshold ED selected to be examined for average relative bias or why it should be the required threshold for the RGEES Surveys. Nonetheless, ED concludes from this “What If” Analysis 2, that the relative bias value of 10 percent should be a requirement of an acceptable RGEES Survey:

Our review of these distributions, coupled with the reported expectation that the accepted response rates are likely to be close to 50 percent, led to the addition of the criteria of relative bias value of less than 10 percent. Id. at 11.

One would expect that ED would provide some justification for the 10 percent relative bias threshold. Instead, ED’s supporting documentation merely illustrates that 10 is the percentage of relative bias that results when there is a relative difference of 20 percent and a response rate of 50 percent and that the lower the response rate, the more GE programs will have an average relative bias in excess of 10 percent. The omission of genuine analysis is particularly glaring given that ED’s “What If” Analysis 2 charted absolute relative bias and, therefore, did not consider the net relative bias, i.e., the instances where there may have been an over-representation of students with one of the three observed attributes (Pell, 0 EFC, and female), which ED has stated is negatively correlated to earnings.

ED’s failure to justify the 10 percent relative bias threshold is also troublesome given the fact that even the NCES acknowledges that the magnitude of relative bias does not necessarily inform us on statistical confidence.

Comparing the magnitude of bias to the survey statistics: A simple way to look at the bias is to compare it with the survey statistic. Calculating such a relative bias allows for comparisons across different survey estimates. This does not, however, provide information on the bias relative to the confidence one has on the statistic based on the standard error. However, surveys do calculate a mean ‘relative bias’ value based on the mean of multiple relative bias values. J. Bose, “Nonresponse Bias Analyses at the National Center For Education Statistics,” Proceedings of Statistics Canada Symposium (2001) (emphasis added).

In other words, NCES posits that even were ED to calculate and justify a proper relative bias threshold, this does not mean that exceeding the bias threshold significantly lowers the confidence level of the survey results.

Moreover, ED is requiring that relative bias be averaged over three characteristics (Pell, 0 EFC, and female), two of which are positively correlated with each other, which could result in an over-estimated relative bias. That is, all students with 0 EFC will presumably also be Pell recipients.

Another significant matter that ED has failed to consider in setting the threshold for acceptable relative bias is the known tendency of survey respondents to underreport their income. According to the U.S. Census Bureau, “there is a tendency in household surveys for respondents to underreport their income.” census.gov. This concern is echoed in a 1997 study carried out by researchers at the U.S. Census Bureau and the U.S. Bureau of Labor Statistics. (“The response error research . . . suggests that income amount underreport[ing] errors do tend to predominate over overreporting errors, but that even more important factors affecting the quality of income survey data are the underreporting of income sources and often extensive random error in income reports”). J. Moore, L. Stinson, and E. Welniak, Jr., “Income Measurement Error in Surveys: A Review,” p. 2.

Finally, as ED admits, its entire “analysis” to assess the appropriate relative bias threshold was based on the presumption that the acceptable response rate would be 50 percent, a rate not adequately supported in its analysis as explained above.

Thus, a close examination of ED’s justification for its 10 percent relative bias threshold reveals that ED first set the 10 percent threshold and then illustrated various scenarios based on that predetermined threshold. This window dressing approach simply does not substitute for an honest evaluation of the appropriate bias threshold.

No allowance for institutions to impute earnings for missing respondent data

In its analysis, ED explains that it will not permit institutions to carry out any “nonresponse adjustments” in their RGEES Surveys. Id. at 8-9. ED provides two reasons for this decision. First, it states that nonresponse adjustments are typically used in sample surveys and that the RGEES Survey is a universe survey. Id. Second, ED justifies its decision stating that nonresponse adjustments are not appropriate for “high stakes” reporting environments and it deems the RGEES Survey to be a “high stakes” reporting environment. Id.

However, ED carries out its own nonresponse adjustment when it imputes zeros for missing SSA income data. If retrieving earnings data for the GE metrics qualifies as a “high stakes” endeavor and if nonresponse adjustments are categorically inappropriate for universe data collection efforts, then one would assume ED likewise would not use imputation. However, if nonresponse adjustments are appropriate in this context, then institutions should also be permitted to carry out such adjustments to account for their own missing data. Accordingly, ED should reconsider its own practice of imputation and consider permitting institutions to impute or carry out weighting adjustments to address missing earnings data.

ED has failed to adequately justify its refusal to consider the impact of its own missing SSA data and its imputation of zeros

The proposed RGEES Standards mandate a bias analysis of missing data, i.e., earnings from non-respondents, but ED has refused to consider the potential bias in its own earnings data resulting from its assignment of zeros to graduates in the GE cohorts for whom SSA reports no earnings data. This refusal calls into question the credibility and integrity of the SSA earnings data. Specifically, imputing zeros biases SSA’s earnings reports, particularly for self-employed graduates and those with significant tip income.

In its response, ED maintains that a nonresponse analysis is unnecessary, citing the fact that in its previous SSA earnings extractions, conducted from July to December 2013, it retrieved earnings data from more than 80 percent of the targeted graduate population. ED Response at 13 (regulations.gov). With a “response rate” in excess of 80 percent, ED asserts that it is unnecessary to carry out a nonresponse bias analysis. See id.

However, ED entirely disregards the import of carrying out an analysis of the effect of its imputation of zeros. ED is not excluding the missing SSA earnings data and then calculating the mean and median earnings of graduates from GE programs, confident in the result because it retrieved data for more than 80 percent of them. Rather, it is assuming that in every case where it can find no data about the earnings of a graduate, that graduate earned no income in the calendar year considered.

Where ED knows it can expect to be unable to obtain SSA earnings data for over 11 percent of the graduates, it has a duty to assess the impact on the quality of its data when it imputes zero income for each of these graduates.
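The mechanical effect of treating missing records as zero earnings, rather than excluding them, can be illustrated with a small sketch. Everything in it is hypothetical: the cohort size, the earnings figures, and the variable names are invented for illustration and are not drawn from SSA or ED data. Only the 11.4 percent share of missing records comes from the figure cited earlier in this article. The sketch does not reproduce how ED or SSA actually computes earnings.

```python
from statistics import mean, median

# Hypothetical cohort of 1,000 graduates; 11.4 percent have no earnings record.
cohort_size = 1000
missing_count = 114

# Hypothetical earnings for the 886 graduates with a record (invented values).
observed = [20_000 + 10 * i for i in range(cohort_size - missing_count)]

# Approach A: exclude graduates with no record.
mean_excluding = mean(observed)
median_excluding = median(observed)

# Approach B: treat every graduate with no record as having earned zero.
with_zeros = observed + [0] * missing_count
mean_zero_imputed = mean(with_zeros)
median_zero_imputed = median(with_zeros)

print(f"mean, missing excluded:     {mean_excluding:,.2f}")
print(f"mean, zeros imputed:        {mean_zero_imputed:,.2f}")
print(f"median, missing excluded:   {median_excluding:,.2f}")
print(f"median, zeros imputed:      {median_zero_imputed:,.2f}")
```

Whatever the observed values are, imputing zeros lowers the mean by exactly the missing share (here, 11.4 percent) and shifts the median downward, because every imputed zero sits at the bottom of the distribution.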

The practice of imputing zeros where data are missing is widely rejected under accepted statistical principles. In its own survey standards, NCES uses 20 different methods of imputation. Not one of these methods includes imputing zeros where data are missing. NCES Statistical Standards, App. B “Evaluating The Impact Of Imputations For Item Nonresponse” (nces.ed.gov). ED’s refusal to consider the impact its zero income imputation has on the quality of the SSA earnings data, or even to explain its refusal, subjects it to being labeled arbitrary and capricious and contrary to its legal obligations under the Administrative Procedure Act. See Del. Dep’t of Natural Res. & Envtl. Control v. EPA, 785 F.3d 1, 16 (D.C. Cir. 2015) (failure to “respond to serious objections” is “arbitrary and capricious”). This is another reason that ED should carry out a serious and statistically valid analysis of the impact of its zero income imputation practice.

Conclusion

The validity of the GE DE rates depends on the validity of the earnings data used in the metrics. Unfortunately, ED’s regulatory scheme relies on SSA data that are entirely unverifiable even by ED. The integrity and veracity of ED’s DE measurements depend on the existence of a method of challenging that data that is sound, logical, and evidence-based. Because the earnings survey appeal is the only alternative earnings appeal available to institutions in all states, if it does not meet this standard, there simply is no universally available fair earnings appeal process.

ED has followed its Paperwork Reduction Act responsibilities in form but not in substance. It has drafted detailed survey standards. It has submitted its survey standards for public scrutiny twice as required by statute. It has published a detailed supporting analysis replete with complex algorithms and charts. It has not, however, provided a factually and legally supportable analysis to allow for a rational response rate, a fair relative bias threshold, and a mechanism to adjust earnings data to account for missing data. In the words of the late David Bowie: “Don’t fake it baby, lay the real thing on me.”

Yolanda Gallegos founded the Gallegos Legal Group, a national education law practice representing colleges and universities around the country. Gallegos Legal Group specializes in higher education regulatory compliance and risk management in areas associated with federal student aid, student grievances, civil rights laws, and contract law.

Yolanda has successfully defended dozens of institutions in program reviews and audits before the U.S. Department of Education, and she has extensive experience in accreditation and state licensing.

Thomson-Reuters recently published her chapter on the recent Violence Against Women Act regulations in Inside the Minds: Emerging Issues in College and University Campus Security. Also, AudioSolutionz will release her book, Gainful Employment Regulations: The Metrics Reloaded.

Yolanda received her J.D. in 1986 from the University of New Mexico School of Law and her LLM in Advocacy from Georgetown University Law Center in 1989. She is a member of both the District of Columbia and New Mexico Bars.

Contact Information: Yolanda R. Gallegos // Attorney at Law // Gallegos Legal Group // 505-242-8900 // yolanda@gallegoslegalgroup.com // gallegoslegalgroup.com